It’s the third and final week of the Easter holidays, which makes it a perfect time to write a blog post! I think Easter is my favourite holiday for two reasons. First of all, since it stretches out for a relatively long duration, it’s possible to take the time out to “recharge your batteries” and check off some less important bits and bobs of your todo list (for me, this is backing up all the family photos onto the cloud!). Secondly, time off at Easter isn’t spent inside the shadow of impending exams, so revision isn’t so urgent.

My family and I went to Durham for a few days which was a great break. It’s a quiet, quaint and hilly place, which is an ideal place to forget about all things university related for a while! The hotel we stayed at had a gym and a fantastic pool, so I despite going on a hiatus from work, I didn’t have to take a break form exercise too!

Alas, all holidays have to end, and now it’s back to a mixture of coursework and dissertation writing. Thankfully, I’m working on a few cool things at the moment; in the AI & Games course, I’m implementing an AI to choose prices for goods to maximise the profit of a shop, in Introduction to Current Topics in Biology, I’m working on a short video describing how induced-pluripotent stem cells are being used to 3D print organs, and in Advanced Algorithms II, I’m working on implementing a program to solve systems of initial value problems (used to model things like chemical reactions and biological processes).

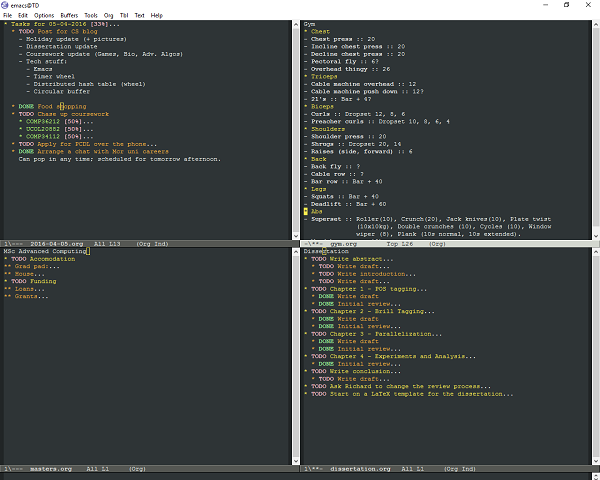

Outside of things strictly related to university work, I’ve begun the slow process of switching text editors from using Sublime to using Emacs. I had been tentatively using Vim for a while, but never really got over the super-steep learning curve that is learning the keyboard shortcuts. Emacs isn’t much better in terms of initial-usability, but the shortcuts make more sense to me, and I’ve been persuaded to give it a go by a number of blog posts (org-mode, emacs-latex) recently.

In my previous post, I said that I might talk about genetic algorithms in this post. However, I decided that if you want to learn about them, then you can take COMP36212. However, I came across two interesting blog posts with circle-related topics recently; one is about distributed hash tables (where the nodes in the hash table are arranged in a logical circle), and the other is about timing wheels (specifically approaches 4,5 and 6) which is a way to keep track of and fulfil timer requests (such as something like Thread.sleep() in Java). It’s not often that computer scientists get to use circular algorithms or datastructures in their work, in fact, we’re more likely to be actively avoiding situations where we have them (see cycle detection and circular dependencies).

However, circular buffers are an interesting topic I’d like to talk about. They solve the problem where you want to store some temporary data that you need to process but you only want to use so much memory to store the data.

A typical use case could be if you had something producing data such as a microphone, and something else consuming the data such as a voice processing app and you wanted to account for the situation where the voice processing app slowed down for some reason and couldn’t keep up with how much data was being produced by the microphone. In this situation, to avoid having to exceed your memory limits for the raw speech data, you need to either drop some old data from the buffer, or ignore some incoming data.

The first option is usually more useful, since old data is usually less important than new data, and this is the method implemented by a circular buffer. Wikipedia explains how a circular buffer works better than I would, but essentially, you have an array of data the same size as the amount of memory you want to use, and make the end of the array ‘wrap around’ to the start of the array if you try to add more data to the array than it can store, causing old entries to be overwritten by newer ones automatically if the buffer is full.

I got asked to implement a circular buffer in a coding interview once. I think it’s a good question, because the concept is easy enough for somebody who hasn’t met them before to grasp quickly, yet it’s easy to make silly mistakes such as off-by-one errors, so a candidate needs to work hard to convince themselves and the interviewer that their code works properly in all cases!